What is audio normalization? It's a question many people ask, and it is almost always followed by a second one: should I normalize my audio? These are the questions we are going to answer.

Audio normalization has been around since the early days of digital audio production and has become a somewhat controversial topic. The goal of this article is to clear up any preconceived notions you may have about it. We want you to be able to make an educated decision on whether or not to use it.

In this article, we will discuss what audio normalization is, whether normalizing audio affects quality, how I use audio normalization, and whether you should normalize your audio. With that being said, let's first look at what audio normalization is.

What Is Audio Normalization?

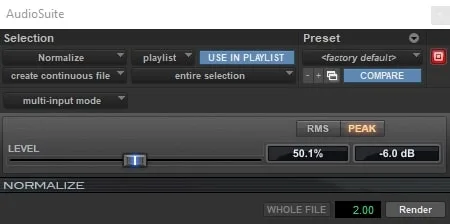

Audio normalization is the process of changing the amplitude of a recording by a constant amount of gain so that it reaches a target level. Most of the time that means turning the recording up, but the same process can also turn it down. The overall dynamics and signal-to-noise ratio stay the same because the same gain is applied to the entire recording. This process is typically done within a digital audio workstation (DAW). There are two types of audio normalization: peak and loudness. Let's first discuss peak.

Peak Normalization

Peak normalization looks at the highest signal level present in a recording and uses it as the reference point. For example, if the loudest peak in the song is at -6 dBFS (decibels relative to digital full scale), then everything will be brought up by 6 dB. This assumes your target level is 0 dBFS. If you want to normalize all the individual tracks in a session, this is the type of normalization you would use. Peak is probably the most popular method of audio normalization.
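
To make the math concrete, here is a minimal Python sketch of peak normalization. It assumes the audio is already loaded as a plain list of floating-point samples between -1.0 and 1.0, where 1.0 is digital full scale. The function name `peak_normalize` and the sample values are made up for illustration; your DAW does the same job with a single button.

```python
import math

def peak_normalize(samples, target_dbfs=0.0):
    """Apply one constant gain so the loudest peak lands on target_dbfs.

    Returns the scaled samples and the gain that was applied, in dB.
    """
    peak = max(abs(s) for s in samples)
    if peak == 0:
        # Pure silence has no peak to reference, so leave it untouched.
        return list(samples), 0.0
    peak_dbfs = 20 * math.log10(peak)   # current peak level
    gain_db = target_dbfs - peak_dbfs   # how far the peak is from the target
    gain = 10 ** (gain_db / 20)         # dB converted to a linear multiplier
    return [s * gain for s in samples], gain_db

# Hypothetical clip whose loudest sample sits at -6 dBFS.
minus_six = 10 ** (-6 / 20)
clip = [0.1, -0.25, minus_six, -0.3]

normalized, gain_db = peak_normalize(clip, target_dbfs=0.0)
print(f"gain applied: {gain_db:+.2f} dB")
```

Every sample is multiplied by the same number, which is why the relationship between the loud and quiet parts does not change.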

Loudness Normalization

Loudness normalization is based on the overall loudness measurement of a recording. The volume of the recording is adjusted to bring its overall loudness to a specified target level. Loudness can be measured in several ways, such as RMS, but in today's industry LUFS has become the standard. Loudness normalization often happens during the audio mastering process, sometimes without anyone giving it a second thought.

One of the most common places to see loudness normalization is on audio streaming platforms. For example, Spotify uses -14 LUFS as its default target. If it receives a song that measures -10 LUFS, it will turn that song down by 4 dB. Platforms do this so that when you listen to songs from different artists, you don't have to constantly change the volume on your playback device. With playlists being such a popular way to listen, this is a must.
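
The arithmetic behind that example is simple enough to sketch in a few lines of Python. This assumes the integrated loudness of the song has already been measured in LUFS; the measurement itself is not implemented here. The function names are hypothetical, and real platforms may treat quiet songs differently than this simple subtraction suggests.

```python
def playback_offset_db(track_lufs, target_lufs=-14.0):
    """Gain change in dB needed to move a track to the target loudness."""
    return target_lufs - track_lufs

def db_to_linear(gain_db):
    """Convert a gain in dB to a linear amplitude multiplier."""
    return 10 ** (gain_db / 20)

offset = playback_offset_db(-10.0)   # a song mastered at -10 LUFS
print(offset)                        # -4.0, so the song is turned down 4 dB
print(round(db_to_linear(offset), 3))
```

A negative result means the platform turns the song down, and a positive result means the song is quieter than the target.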

The biggest problem with streaming platforms today is that they can't agree on a standard loudness normalization level. This makes life tough for audio mastering engineers, which is why many choose to ignore the targets altogether. Hopefully an agreement will come about in the near future.

Does Normalizing Audio Affect Quality?

Any discussion of audio normalization has to address whether normalizing audio affects quality. The short answer is no. If you do peak normalization, the dynamic range of your song or track stays intact. The noise in the recording does come up by the same amount as the signal, but it is no louder relative to the signal than it was before. Loudness normalization in the streaming world doesn't have a negative effect on the recording either.
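
If you want to see why a constant gain leaves these ratios alone, here is a small Python sketch. The signal and noise values are made up for illustration, and keeping them in separate lists is purely a convenience for the demonstration, since in a real recording the two are mixed together.

```python
import math

def rms(samples):
    """Root-mean-square level of a list of samples."""
    return math.sqrt(sum(s * s for s in samples) / len(samples))

def to_db(ratio):
    """Express an amplitude ratio in decibels."""
    return 20 * math.log10(ratio)

def crest_factor_db(samples):
    """Peak-to-RMS ratio in dB, a rough indicator of dynamics."""
    return to_db(max(abs(s) for s in samples) / rms(samples))

# Hypothetical signal and noise, kept separate so each can be measured.
signal = [0.2, -0.4, 0.1, -0.05, 0.3]
noise = [0.001, -0.002, 0.0015, -0.001, 0.002]

gain = 10 ** (6 / 20)                    # a constant +6 dB of gain
louder_signal = [s * gain for s in signal]
louder_noise = [n * gain for n in noise]

snr_before = to_db(rms(signal) / rms(noise))
snr_after = to_db(rms(louder_signal) / rms(louder_noise))
crest_before = crest_factor_db(signal)
crest_after = crest_factor_db(louder_signal)
noise_rise_db = to_db(rms(louder_noise) / rms(noise))

print(f"SNR before/after:   {snr_before:.2f} / {snr_after:.2f} dB")
print(f"Crest before/after: {crest_before:.2f} / {crest_after:.2f} dB")
print(f"Noise floor rose by {noise_rise_db:.2f} dB")
```

The signal-to-noise ratio and the peak-to-RMS ratio come out the same before and after, while the noise floor itself rises by exactly the gain that was applied.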

My recommendation is that if you are sending your tracks off to a mix engineer, ask them what they want you to do with your tracks. They are the professional and they will do what is best for you and your music. Some may choose to normalize your tracks and some may not. There is no standard workflow to audio success.

How I Like To Use Audio Normalization

My favorite way to use audio normalization is to get all the tracks within my session to the same peak level. I do this because many audio plugins are designed to react to specific input levels, and those are the levels I set out to hit. Sweet spots differ from plugin to plugin, but most fall within a similar range.

My overall goal is to get all my tracks to a peak level of -6 dBFS. This level works great for the first plugin in my chain, which is the Slate Digital Virtual Tape Machine. This plugin uses a VU meter for reference and recommends that you aim for 0 on it.

With all my tracks peak normalized to -6 dBFS, I know that my gain staging is set up properly and I'm ready to mix. Gain staging is one of the most important techniques in mixing and it must be done right. Please use my tip above to help get better mixes!
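
Here is a rough Python sketch of that workflow applied to a whole session. It assumes each track is a list of floating-point samples between -1.0 and 1.0, and the track names and values are invented for the example. In practice your DAW's normalize function does this for you; the sketch just shows what is happening under the hood.

```python
import math

TARGET_DBFS = -6.0  # the peak level I aim for on every track

def peak_dbfs(samples):
    """Peak level of a track in dBFS, or -inf for pure silence."""
    peak = max(abs(s) for s in samples)
    return 20 * math.log10(peak) if peak > 0 else float("-inf")

def gain_to_target(samples, target_dbfs=TARGET_DBFS):
    """Constant gain in dB that moves the track's peak onto the target."""
    level = peak_dbfs(samples)
    if level == float("-inf"):
        return 0.0  # nothing to normalize on a silent track
    return target_dbfs - level

# Hypothetical session with tracks recorded at very different levels.
session = {
    "kick": [0.9, -0.7, 0.2],
    "vocal": [0.1, -0.12, 0.05],
    "bass": [0.4, -0.5, 0.3],
}

gains = {name: gain_to_target(track) for name, track in session.items()}
leveled = {
    name: [s * 10 ** (gains[name] / 20) for s in track]
    for name, track in session.items()
}

for name in session:
    print(f"{name}: {gains[name]:+.2f} dB -> peak {peak_dbfs(leveled[name]):.2f} dBFS")
```

Notice that the hot kick track gets turned down while the quiet vocal gets turned up. Each track receives its own constant gain, so every track ends up peaking at the same level.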

Should You Normalize Audio?

Based on my recommendation above, I would say you definitely should! Now, you don't have to use peak normalization to achieve proper gain staging. You can also make clip gain adjustments by hand. The problem with that method is that it takes way longer and, as we know, time is money! Peak normalization is super fast, easy, and effective.

Final Thoughts

I hope that after reading this article you understand what audio normalization is. It is an important process both in the studio and in the streaming world. Whether you decide to use it or not, the process is there waiting for you. If you have any further questions, feel free to reach out to us here at Audio Sorcerer. Also, check out our other great content on audio recording, mixing, and mastering. Peace out!

"Some of the links within this article are affiliate links. These links are from various companies such as Amazon. This means if you click on any of these links and purchase the item or service, I will receive an affiliate commission. This is at no cost to you and the money gets invested back into Audio Sorcerer LLC."